Memorial Continuum

Date

July 2021

Teammates

Tang Sheng

My Role

Ideation

Physical Computing

Virtual Space Design

Graphic Design

Tools

Raspberry Pi, TSNE, Node-Red, IBM Watson, Python Automatic Web Crawling, MQTT Protocol, Point Cloud, Unity, Blender

Project Brief

Spoken verbal communication is an integral part of our lives and identities, but due to its ephemeral nature, the content easily fades away, leaving traces of certain labeled impressions in our memory storage.

In this project, a custom-made speech-to-text device was created with the intention to capture spoken content from the participants and generate associated visual elements that are being displayed in a three-dimensional virtual space.

The work invites us to consider how computational systems, machine intelligence, and telematic and virtual spaces allow us to experience the materialization and permanence of spoken communication, and, in addition, contemplate its effects on public space and collective memory, as well as navigation and comprehension of its archives.

Background Research

One of the inspirations of the project is that NYU Shanghai will soon move from its old Century Avenue Campus to the new Qian Tan Campus, and this will be my last summer staying in this academic building. There are tons of great memories that happened in this location. So I come up with the idea that how the words people have said (because their content is the easiest one to fade away, nevertheless influence our daily behavior a lot) will become a part of our collective memory towards this academic building. So building a real-time word-generating device and then displaying them in a virtual space becomes our research direction.

Century Avenue Campus

Qian Tan Campus

In order to achieve what we have proposed above, we have first drawn a flow chart to make it clear what we should do at each step.

Node-Red (IBM Watson)

Microphone

Raspberry Pi

Convert to a WAV file

Speech to Text

Natural Language Understanding

Upload to DataBase

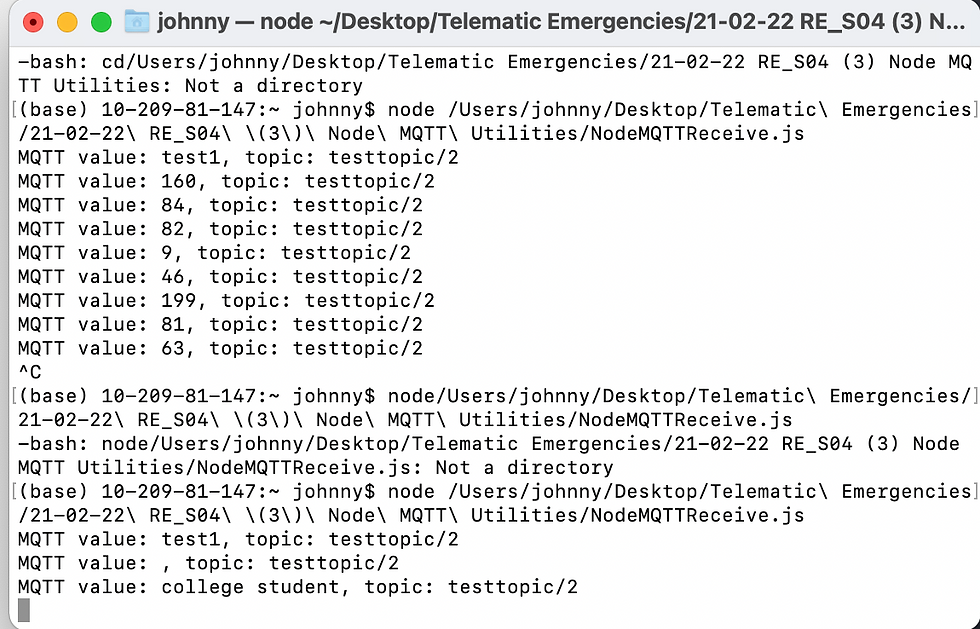

Use the MQTT protocol to send data to the Mac Terminal

Inside the Database

Audio1.wav

Keywords: XXXXX

Topic: XXXXX

Relevance: XXXXX

Audio2.wav

Keywords: XXXXX

Topic: XXXXX

Relevance: XXXXX

Audio3.wav

Keywords: XXXXX

Topic: XXXXX

Relevance: XXXXX

Cloudant

Python Automatic Web Picture Crawling

Text Information

Unity + TSNE

Display

Fabrication

The first step is to make sure that the physical installation has a shell structure to protect the Raspberry Pi and other electronic components. To do that, we design the outlook of the whole device. Besides that, we purchase an external storage battery to provide the energy and a fan to lower the temperature near the CPU to prevent it from burnt out in summer.

The second step is to design the outlook of the audio-generating device. We are inspired by the famous French dessert 'soufflé'. Its appearance reminds us of the suppleness feature of the words themselves, which means capturing a word doesn't necessarily mean anything, you need to find the right context to know what it stands for. Also, since we need to put a microphone in the middle of it, the cylinder structure seems to provide the least disturbance compared with other regular shapes, like squares, pyramids, etc.

The last step is to make people aware that they are being listened to. The privacy issue always exists when we are trying to grab data from the public. So we have to make the device very "noticeable" to make people aware of its existence. To do that, we imitate the JBL Pulse 4 to let the device has a nice effect of light whenever it's turned on.

Raspberry Pi

Following the flow chart above, there are mainly two things we have to do inside the Raspberry Pi, turning the real-time audio into a WAV file, and processing this on the Node-Red platform utilizing the IBM Watson.

To create the WAV files, we directly code them inside the Linux Terminal. Since there are no point in recording words when there is no one around, also for the sake of computationally available, we manually set a noise threshold, whenever the audio around the device is larger than that, it will begin to record. As soon as the surrounding is quiet, lower than the threshold, it will stop recording and upload the file to the Node-Red.

Then we utilize the machine intelligence IBM Watson to process the uploaded WAV files. Firstly use the "Speech to Text" to transform audio files into string materials. Secondly putting the texts into the "Natural Language Understanding". As shown in the picture, we can generate some important features of this material, including keywords, topics, and relevance. At the very end, we will push these key elements to the MQTT protocol so that no matter where the device is, our computer can always get information.

Python Automatic Web Crawling

With the given topic sent from the MQTT, a more direct way to show what people are talking about is to utilize the picture. So we program some Python codes to make this process automatic. Pictures downloaded by using the Google API will be stored in a folder and will be named after by their name and relevance. These pictures will be later sent to the Unity engine and the TSNE model, which will determine their XYZ position and their lifetime in the virtual space.

TSNE is essentially a dimensionality reduction method that is used to visualize in a relatively low and understandable dimension. We utilize this technology to help us locate the pictures geographically in the virtual space we create in Unity.

Virtual Space Design

Since the project is about the relationship between verbal communication and collective public memory. Instead of using traditional photography techniques to scan and create the model of certain places in the academic building, we use the point cloud to do it because it can create a sense of patchwork, which perfectly matches our idea of collective memory. As for choosing the specific locations, we do a quick survey among students and eventually decide to scan the cafeteria and IMA studios.

Before we combine all the stuff we have done into Unity, we decide to first test whether the serial communication and the data transmission process are working smoothly. So we build a demo scene in Unity, using emotion to control the intensity and moving speed of the particles. The result turns out to be perfect and this gives us confidence that our project will work fine as well.

Here are the final result of pulling every single piece of the project together. We are able to grab the topic people are talking about and put it into the virtual space in the form of text and pictures. Just as we cannot remember what we have said several minutes ago, these collective memories may exist longer in the virtual space. But eventually, they will be gone and replaced by other words. It invites us to consider how computational systems, machine intelligence, and telematic and virtual spaces allow us to experience the materialization and permanence of spoken communication, and, in addition, contemplate its effects on public space and collective memory, as well as navigation and comprehension of its archives.

Exhibition & DURF Symposium

After finishing several rounds of user tests, we set it up near the on-campus cafeteria to hold an exhibition here. Some audience provides us with feedback that in most cases, they seldom paid attention to their talks in a relatively public place, especially with someone that they are familiar with. However, after seeing this project, they began to pay more attention to this field and reflect on their relationships with the collective memory in a public place.

At the 2021 Fall DURF Symposium, we share our research conclusion and the project with other faculty members at NYU Shanghai in the form of an academic poster. We have received critical comments and feedback helping us to improve the project.

Reflection

Generally speaking, based on the feedback and comments we generate from the exhibition and the symposium, the project is successful. However, if we have more time, we probably will reconsider several important issues to improve our project.

Firstly, people often won't stay for a very long time in public spaces like cafeterias. They might just want to grab a morning coffee. In this way, they might be a part of the collective memory, but they will never be aware of that since it often takes the "Natural Language Understanding" several times to produce the related content, which means they will never have a chance to see the result.

Secondly, it might be a better way to transmit the data from a local wifi sandbox rather than a cloud database because it's more efficient and we don't really need to look up what people have said several weeks ago.